A survey by SmartBear found that 43% of teams describe their test automation suite as a “significant source of pain” rather than a confidence-builder. That’s nearly half of all teams with automation fighting the very thing they built to help them ship faster.

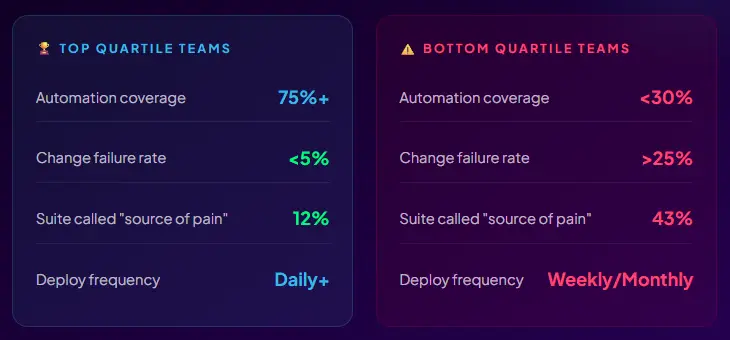

The root cause, according to the State of DevOps 2025, is not tooling. It’s discipline. Organisations in the top quartile of software delivery performance automate over 75% of their testing and maintain change failure rates below 5%. The bottom quartile automates under 30% and experiences change failure rates above 25%. Both groups have access to the same frameworks. What separates them is whether they follow deliberate best practices or just write tests.

This blog is the distilled version of what the top quartile actually does differently. Not theory, but production-tested practices grounded in 2026 reality.

These aren’t textbook principles. They’re ranked by the frequency with which their absence causes real production incidents, wasted engineering time, or broken CI pipelines across teams we’ve worked with and research we’ve studied.

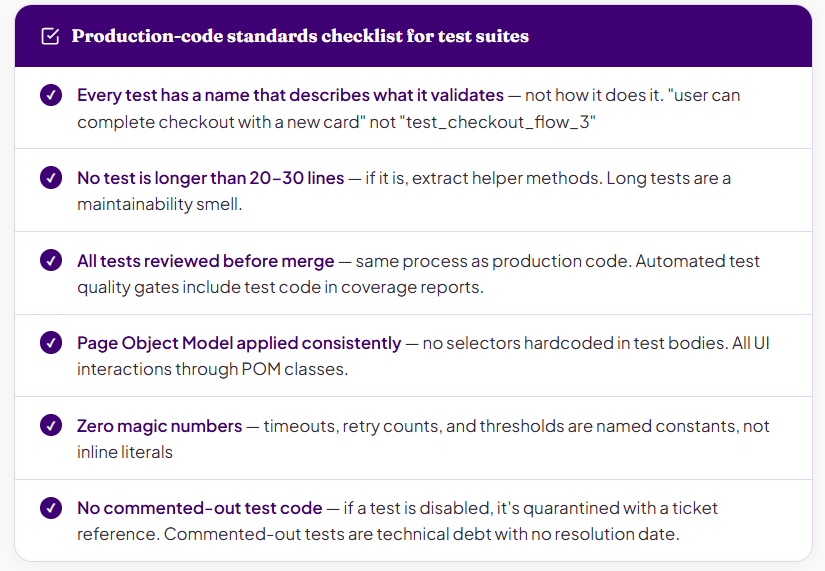

The single fastest predictor of whether an automation programme will survive two years is whether the team treats test code with the same engineering rigour as application code. Reviews, refactoring, naming conventions, no magic numbers, no copy-paste duplication.

This matters because every shortcut in test code compounds. A poorly named test file becomes a file nobody edits because nobody understands it. A duplicated selector becomes a two-hour maintenance task when the component changes. A magic number buried in a wait condition becomes an intermittent failure that costs 45 minutes to diagnose at 2 am during a release.

The Page Object Model (POM) is the structural expression of this principle for UI tests. One class per page or component, selectors centralised, no UI interaction logic scattered across test files. When a screen changes, you update one file, not 40 tests.
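As a rough illustration of that structure, here is a minimal sketch of a page object. The `Driver` interface, the `RecordingDriver` fake, and the `data-testid` selectors are all hypothetical stand-ins so the example runs without a browser; in a real suite the driver would be your framework's page object.

```typescript
// Hypothetical minimal browser abstraction (an assumption for this sketch).
// In a real suite this role is played by the UI framework's page/driver object.
interface Driver {
  fill(selector: string, value: string): void;
  click(selector: string): void;
}

// Page object: the only place that knows the login screen's selectors.
class LoginPage {
  // Selector values are illustrative assumptions.
  private static readonly USERNAME = "[data-testid='username']";
  private static readonly PASSWORD = "[data-testid='password']";
  private static readonly SUBMIT = "[data-testid='login-submit']";

  private readonly driver: Driver;

  constructor(driver: Driver) {
    this.driver = driver;
  }

  // Tests call this intent-level method and never touch selectors directly.
  logIn(username: string, password: string): void {
    this.driver.fill(LoginPage.USERNAME, username);
    this.driver.fill(LoginPage.PASSWORD, password);
    this.driver.click(LoginPage.SUBMIT);
  }
}

// In-memory fake that records interactions instead of driving a browser.
class RecordingDriver implements Driver {
  readonly actions: string[] = [];

  fill(selector: string, value: string): void {
    this.actions.push(`fill ${selector} ${value}`);
  }

  click(selector: string): void {
    this.actions.push(`click ${selector}`);
  }
}

const driver = new RecordingDriver();
new LoginPage(driver).logIn("qa-user", "s3cret");
```

If the submit button's selector changes, only the `SUBMIT` constant moves; every test that calls `logIn` stays untouched.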

For API tests, the equivalent is shared client utilities and schema validators. Don’t repeat the HTTP client configuration in every test. Don’t inline expected response schemas. Abstract them so they are reusable, reviewable, and nameable.
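One way this could look, sketched under assumptions: `API_BASE_URL`, `buildRequest`, and the `UserResponse` shape are hypothetical names invented for illustration, and the validator is a hand-written type guard rather than any particular schema library.

```typescript
// Shape of a request our tests hand to whatever HTTP client they use.
interface ApiRequest {
  url: string;
  method: string;
  headers: Record<string, string>;
}

// Shared configuration lives in one reviewed place (values are assumptions).
const API_BASE_URL = "https://api.example.test";
const DEFAULT_HEADERS: Record<string, string> = {
  Accept: "application/json",
  "Content-Type": "application/json",
};

// Every test builds requests through this helper instead of repeating config.
function buildRequest(method: string, path: string, token?: string): ApiRequest {
  const headers: Record<string, string> = { ...DEFAULT_HEADERS };
  if (token !== undefined) {
    headers["Authorization"] = `Bearer ${token}`;
  }
  return { url: `${API_BASE_URL}${path}`, method, headers };
}

// Named, reusable response schema (hypothetical shape).
interface UserResponse {
  id: number;
  email: string;
}

// Type guard acting as a simple schema validator for UserResponse.
function isUserResponse(body: unknown): body is UserResponse {
  if (typeof body !== "object" || body === null) {
    return false;
  }
  const candidate = body as Record<string, unknown>;
  return typeof candidate["id"] === "number" && typeof candidate["email"] === "string";
}
```

A test then asserts `isUserResponse(body)` by name, so a schema change is a one-line diff in a shared file rather than an edit to every test that touches the endpoint.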

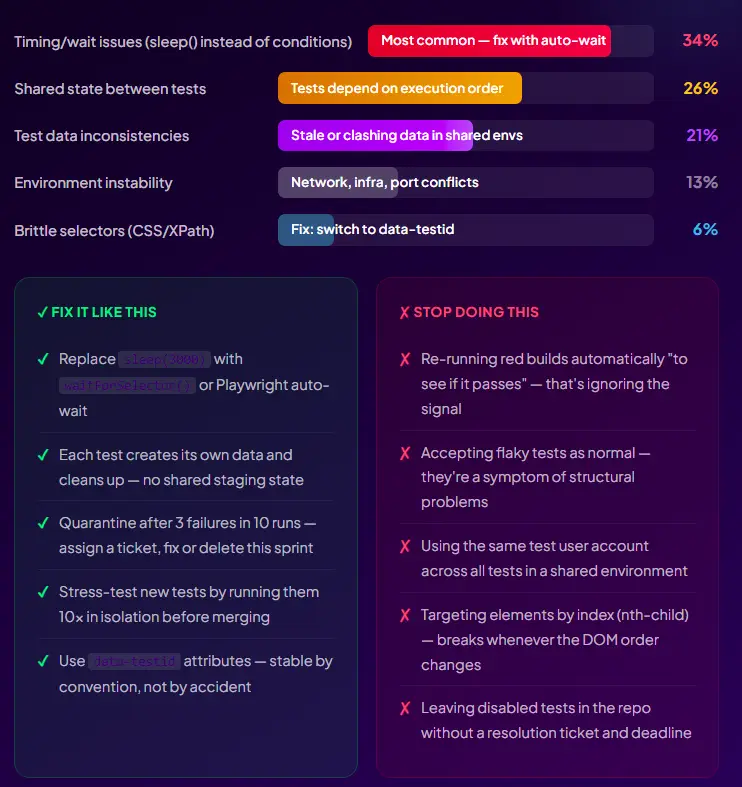

Flaky tests are the automation world’s most insidious problem because their damage is indirect. The test itself doesn’t fail your product; it fails your team’s confidence in the entire suite. When engineers learn to re-run a red build automatically before investigating, you’ve lost the feedback loop that makes automation valuable in the first place.

The data is clear: 15–30% of all automated test failures across the industry are flaky, not genuine product defects. In large suites, this translates to hundreds of false alerts per month, each requiring engineering time to investigate and dismiss.

Here’s a problem that rarely appears in “best practices” articles but shows up in almost every struggling automation programme: 44% of testing delays are caused by inadequate test data, according to the World Quality Report 2025. Not slow test execution. Not insufficient automation. Test data.

Teams invest heavily in CI/CD pipelines and shift-left testing, then find their pipelines sitting idle for days waiting for a DBA to provision usable test data. Or they clone production databases, creating privacy risks, and share a single staging environment across 12 application teams, creating contention, ordering dependencies, and flaky tests that flip depending on what another team ran last night.

Each test owns its data. Creates what it needs at the start, cleans up at the end. No reliance on data left by a previous test run or another team’s environment.
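The create-then-clean-up rule can be sketched as a small helper. Everything here is an assumption for illustration: `withOwnedData`, `createOrder`, and the in-memory `orders` map stand in for whatever database or API your tests really use, and a real version would most likely be asynchronous.

```typescript
// Runs a test body against freshly created data and always cleans up,
// even when the body throws.
function withOwnedData<T, R>(
  create: () => T,
  destroy: (data: T) => void,
  body: (data: T) => R
): R {
  const data = create();
  try {
    return body(data);
  } finally {
    destroy(data);
  }
}

// In-memory stand-in for a database table (hypothetical).
interface Order {
  id: string;
  total: number;
}
const orders = new Map<string, Order>();
let nextId = 0;

// Each call creates a uniquely identified record, so tests never collide.
function createOrder(): Order {
  nextId += 1;
  const order: Order = { id: `order-${nextId}`, total: 42 };
  orders.set(order.id, order);
  return order;
}

function deleteOrder(order: Order): void {
  orders.delete(order.id);
}

// The test reads only the record it created; nothing is left behind afterwards.
const seenTotal = withOwnedData(createOrder, deleteOrder, (order) => {
  return orders.get(order.id)?.total;
});
```

The `finally` block is the important design choice: a failing assertion still triggers cleanup, so one red test cannot poison the data for the next run.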

Never use real production data. Mask or synthesise everything. Only 4% of organisations have fully compliant development and test environments, according to K2view’s 2026 State of Enterprise Data Compliance survey – 76% have experienced a sensitive-data incident in lower environments.
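As a rough sketch of what masking can mean in practice, the example below replaces personal fields with deterministic tokens. The `Customer` shape and `maskCustomer` function are hypothetical, and the FNV-1a hash is a non-cryptographic choice made only to keep the example dependency-free; a real masking pipeline would more likely use a keyed hash or a dedicated masking tool.

```typescript
// FNV-1a 32-bit hash. Non-cryptographic: used here only so the sketch is
// self-contained. Do not treat it as a secure anonymisation primitive.
function fnv1a(input: string): string {
  let hash = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash.toString(16).padStart(8, "0");
}

// Hypothetical record shape.
interface Customer {
  id: number;
  name: string;
  email: string;
}

// Replaces personal fields with tokens derived from the original email.
// The same input always yields the same token, so joins across masked
// tables keep working while the real values never reach the test environment.
function maskCustomer(customer: Customer): Customer {
  const token = fnv1a(customer.email.toLowerCase());
  return {
    id: customer.id,
    name: `Customer ${token}`,
    email: `user-${token}@example.test`,
  };
}

const masked = maskCustomer({ id: 7, name: "Jane Doe", email: "jane.doe@corp.example" });
```

Determinism is the trade-off to be aware of: it preserves referential integrity, but it also means the mapping must be protected or salted in a real system.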

Provision data programmatically. Manual DBA tickets for test data provisioning are a process anti-pattern. Automate provisioning via API or container snapshot. Target: data available in minutes, not days.

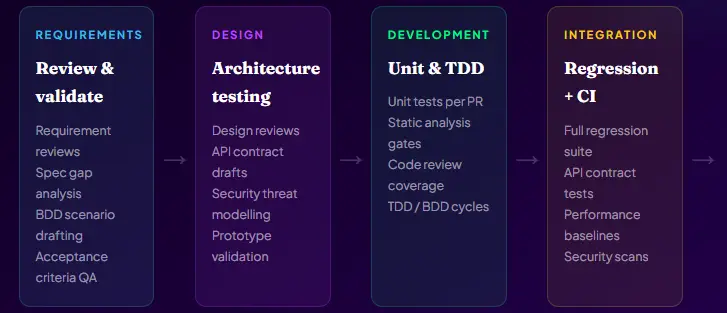

The shift-left principle has been discussed for decades, but in 2026, it has a sharper meaning. It’s not just “test earlier.” It’s “embed testing intelligence into every phase of the development lifecycle, starting from the moment a requirement is written.”

The economics are undeniable: a defect found during requirements review costs roughly 1× to fix. The same defect found in integration testing costs approximately 15×. In production: 100×. Every hour of shift-left investment returns somewhere between 10× and 100× in avoided production cost.
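To make the multipliers concrete, here is a small worked calculation. The multipliers come from the figures above; the baseline of 200 cost units is a made-up assumption, so substitute your own number.

```typescript
// Relative cost of fixing the same defect, by the phase in which it is found.
const COST_MULTIPLIER = {
  requirements: 1,
  integration: 15,
  production: 100,
} as const;

type Phase = keyof typeof COST_MULTIPLIER;

// Cost to fix a defect found in a given phase, relative to a baseline cost.
function fixCost(baseCost: number, phase: Phase): number {
  return baseCost * COST_MULTIPLIER[phase];
}

// Hypothetical baseline: 200 cost units to fix at requirements review.
const baseCost = 200;

const atRequirements = fixCost(baseCost, "requirements"); // 200
const atIntegration = fixCost(baseCost, "integration"); // 3000
const inProduction = fixCost(baseCost, "production"); // 20000
```

On these assumed numbers, catching the defect at requirements review rather than in production avoids 19,800 cost units for a single defect.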


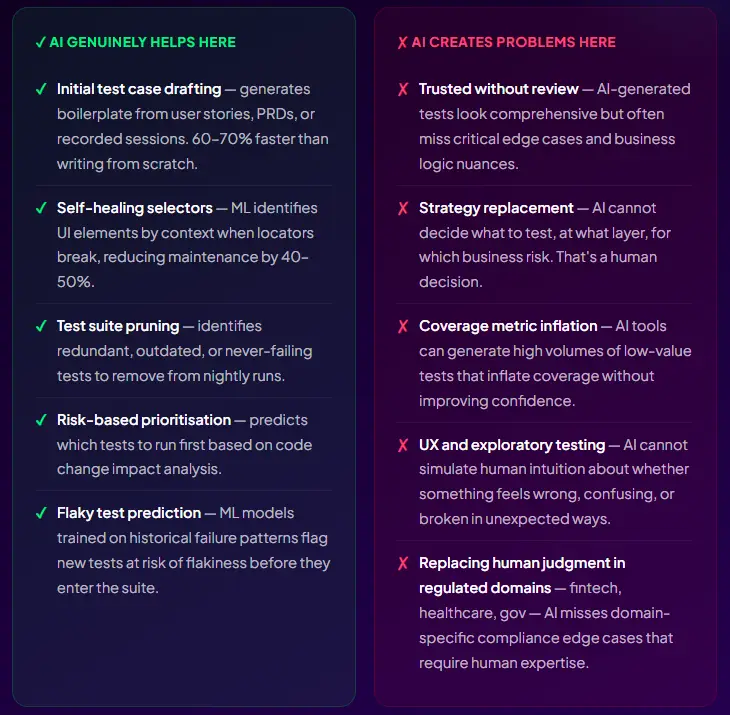

In 2026, 72% of QA professionals use AI for test generation and script optimisation, according to Katalon’s State of Quality Report 2025. But the usage patterns vary enormously between teams seeing genuine ROI and teams adding AI-flavoured noise to their existing problems.

AI earns its place in test automation in specific, bounded contexts. Outside those contexts, it introduces false confidence and maintenance debt that compounds faster than the tests it generates.

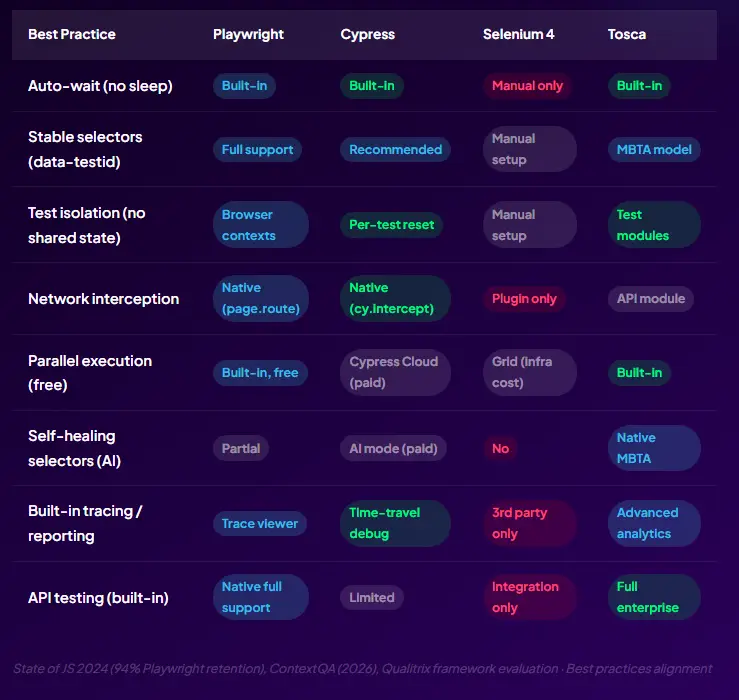

These practices are framework-agnostic in theory, but some tools make them dramatically easier to follow than others. Playwright’s auto-wait mechanism, for example, makes Practice 4 (no sleep()) the default; you’d have to actively fight the framework to introduce timing issues. That’s by design, and it’s part of why Playwright has achieved a 94% retention rate.

Every one of these 12 practices is individually valuable. But their real power is combinatorial. When test code is treated like production code, it’s maintainable. When it’s maintainable, engineers actually maintain it. When tests are maintained, the flaky rate drops. When the flaky rate drops, engineers trust failures. When engineers trust failures, CI gates become real gates. When CI gates are real, defects stop escaping to production. When the defect escape rate drops, release confidence goes up. When release confidence goes up, deployment frequency increases.

That compounding chain is what separates the top quartile from the rest. It doesn’t start with a better framework. It starts with treating test code like it matters.

Want a review of your automation suite against these practices? Book a free automation audit

A test automation plan that works is not a document you write once and file away. It’s a living product specification for your quality engineering capability. It answers the seven questions, allocates tests correctly across the pyramid, defines ownership, specifies what success looks like, and gets reviewed quarterly against reality.

The difference between the 27% of automation programmes that succeed and the 73% that fail is rarely talent or tooling. It’s whether someone sat down before writing the first test and answered.

